A makeshift solution for out-of-vocabulary words is to replace them with an `<unk>` token and train a generic embedding representing such unknown words. Subword approaches try to solve the unknown word problem differently, by assuming that you can reconstruct a word's meaning from its parts. For example, the suffix -shire lets you guess that Melfordshire is probably a location, and the suffix -osis that Myxomatosis might be a sickness. There are many ways of splitting a word into subwords.
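The `<unk>` fallback mentioned above can be illustrated with a minimal sketch. The vocabulary, the two-dimensional vectors, and the `lookup` helper are made up for illustration and do not come from any particular library:

```python
# Toy embedding table: words, vectors, and the "<unk>" row are illustrative assumptions.
UNK = "<unk>"
embeddings = {
    "london": [0.1, 0.3],
    "village": [0.2, 0.1],
    UNK: [0.0, 0.0],  # one generic vector shared by every unknown word
}

def lookup(word):
    """Return the word's vector, falling back to the shared <unk> vector."""
    return embeddings.get(word.lower(), embeddings[UNK])

known = lookup("London")
unknown_a = lookup("Melfordshire")
unknown_b = lookup("Myxomatosis")
```

Both unknown words end up with the same generic vector, so the hint that one is probably a location and the other a sickness is lost. That loss is what subword approaches try to avoid.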

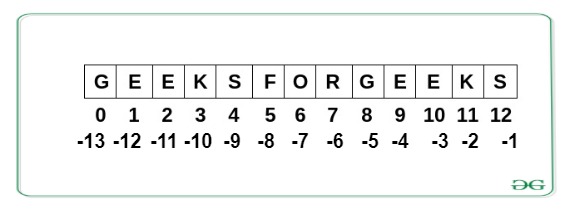

What are subword embeddings and why should I use them?

If you are using word embeddings like word2vec or GloVe, you have probably encountered out-of-vocabulary words, i.e., words for which no embedding exists.

Byte-Pair Encoding (BPE) gives a subword segmentation that is often good enough, without requiring tokenization or morphological analysis. For a word like Melfordshire, the BPE segmentation might be something like melf ord shire. Pre-trained byte-pair embeddings work surprisingly well, while requiring no tokenization and being much smaller than alternatives: an 11 MB BPEmb English model matches the results of the 6 GB FastText model in our evaluation.

Load a Chinese model with vocabulary size 100,000:

    >>> from bpemb import BPEmb
    >>> bpemb_zh = BPEmb(lang="zh", vs=100000)
    >>> bpemb_zh.encode("这是一个中文句子")  # "This is a Chinese sentence."

The actual embedding vectors are stored as a numpy array:

    >>> bpemb_zh.vectors.shape
    >>> # BPEmb objects wrap a gensim KeyedVectors instance:
    >>> type(bpemb_zh.emb)

Use these vectors as a pretrained embedding layer in your neural model:

    >>> from torch import tensor, nn
    >>> emb_layer = nn.Embedding.from_pretrained(tensor(bpemb_zh.vectors))

To perform an embedding lookup, encode the text into byte-pair IDs, which correspond to row indices in the embedding matrix:

    >>> ids = bpemb_zh.encode_ids("这是一个中文句子")
    >>> emb_layer(tensor(ids)).shape  # in PyTorch

The embed method combines encoding and embedding lookup:

    >>> bpemb_zh.embed("这是一个中文句子").shape
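The embedding lookup described above, where byte-pair IDs act as row indices into the embedding matrix, can be sketched without any library. The matrix values, the IDs, and the `embed_ids` helper are hypothetical stand-ins, not output of a real model:

```python
# Hypothetical embedding matrix: 4 subwords, 3 dimensions each (values are made up).
embedding_matrix = [
    [0.0, 0.0, 0.0],  # row 0
    [0.1, 0.2, 0.3],  # row 1
    [0.4, 0.5, 0.6],  # row 2
    [0.7, 0.8, 0.9],  # row 3
]

def embed_ids(ids, matrix):
    """Pick one row per subword ID, preserving the order of the IDs."""
    return [matrix[i] for i in ids]

ids = [2, 0, 3]  # stand-in for the IDs an encoder would return
vectors = embed_ids(ids, embedding_matrix)
shape = (len(vectors), len(vectors[0]))  # (number of subwords, embedding dimension)
```

A real embedding layer does the same row selection, only on tensors and with the pretrained matrix in place of the toy one.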

In short, subwords let you guess the meaning of unknown words: the suffix -shire in Melfordshire, for example, indicates a location.
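To make the byte-pair idea more concrete, here is a toy sketch of the general technique: start from characters and repeatedly merge the most frequent adjacent symbol pair. It is not BPEmb's training code, and the tiny corpus and the helper names `apply_merge`, `learn_bpe`, and `segment` are assumptions chosen for illustration:

```python
from collections import Counter

def apply_merge(symbols, pair):
    """Fuse every adjacent occurrence of `pair` in a symbol sequence."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return out

def learn_bpe(words, num_merges):
    """Learn up to `num_merges` merge rules from a list of words."""
    corpus = Counter(tuple(w) for w in words)  # each word starts as characters
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for symbols, freq in corpus.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break  # every word is already a single symbol
        best = max(pairs, key=pairs.get)  # most frequent adjacent pair
        merges.append(best)
        new_corpus = Counter()
        for symbols, freq in corpus.items():
            new_corpus[tuple(apply_merge(symbols, best))] += freq
        corpus = new_corpus
    return merges

def segment(word, merges):
    """Split a word into subwords by replaying the learned merges in order."""
    symbols = list(word)
    for pair in merges:
        symbols = apply_merge(symbols, pair)
    return symbols

# Made-up toy corpus in which "shire" and "ford" are frequent.
merges = learn_bpe(["shire"] * 5 + ["ford"] * 3, num_merges=10)
pieces = segment("melfordshire", merges)  # ['m', 'e', 'l', 'ford', 'shire']
```

With this toy corpus the unseen word is split into pieces that include ford and shire. A model trained on a large corpus learns many more merges, so its segmentation of the same word will generally differ.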
